Securing Agentic AI: Navigating Identity & Access in the Machine Era

The AI revolution is moving beyond simple chatbots and entering the era of Agentic AI. Businesses are rapidly adopting AI agents that can act independently or on behalf of human users to solve complex problems and drive efficiency.

But with this innovation comes a critical question: How do we secure them? Over the last few months, we have met with more than 100 CISOs, security leaders, and Identity and Access Management (IAM) leaders to gather their perspectives on Agentic AI security. The consensus is clear: organizations are struggling to keep up, and security teams are deeply concerned about the risks posed by the rapid adoption of AI agents across business units.

The 4 Major Security Challenges of Agentic AI

Security leaders consistently pointed to four major hurdles they need to overcome to gain control:

- Visibility and "Shadow AI": Organizations don't know how many agents are currently being used. Questions remain about who the active users are, what use cases they serve, and where these agents are created—whether on personal devices, dedicated agent platforms, or elsewhere.

- Business Risk and Data Leakage: What applications, resources, and data do these agents have access to? There is a significant concern about data leakage, specifically about how much proprietary enterprise data is being fed into public LLMs. Leaders are looking for ways to block public LLM access and enforce the use of secure enterprise LLMs.

- Defining Policy Controls: Security teams need the ability to define strict policy controls—dictating exactly what an agent can access, and conversely, who is authorized to access and use a specific agent.

- Detecting Anomalous Behavior: Traditional threat detection is built for humans and standard software. Teams need new ways to monitor and detect anomalous, potentially malicious, agent behavior.

These concerns are consistent with the OWASP Top 10 for Agentic Applications.

The Two Faces of AI Agents: Deployment Models & Risks

Securing AI agents isn't a one-size-fits-all endeavor. There are two primary deployment models for agents, plus a hybrid that combines them, and each comes with different security and policy control requirements:

1. The Autonomous Agent (The Digital Workforce)

These agents perform independent functions. The most common example is an AI support agent that autonomously handles L1 and L2 support cases, only escalating to human L3 agents when necessary.

To function, this agent needs access to case management systems, documentation, and various other applications.

- The Security Challenge: A unique service account (Non-Human Identity or NHI) must be created in each application the agent interacts with. It is critical that these NHIs are granted only the privileges they require. For example, data write access should be granted only when strictly necessary. Unlike a human user, who generally sticks to their workflow, an AI agent that is granted broad access will tend to explore and use all of it, which can lead to disastrous outcomes.

2. The On-Behalf-Of Agent (The Co-Worker / Co-Pilot)

In this model, a human user leverages an agent to assist in their daily work. The agent uses the human user’s credentials to access applications such as Salesforce, Snowflake, or Slack. The user logs in, generates OAuth tokens for these apps, and grants them to the agent.

- The Security Challenge 1: The "Mirror" Problem (Over-Privilege): Because the agent is acting on behalf of the user, it is technically limited to the scope of that user's permissions. In theory, this provides clear guardrails. However, in most enterprises, the reality is that human users are drastically over-privileged. While ethical human users generally stick to their established workflows, an AI agent will actively explore the boundaries of those privileges to solve a problem—finding and utilizing "accidental" access that the human may not even know they have.

- The Security Challenge 2: Credential Persistence & Lifecycle Gap: Agents require secrets (NHI keys or API tokens) to maintain these "on-behalf-of" connections. These secrets are often long-lived and manually managed.

- The Risk: If these keys are hardcoded or stored in insecure vaults to avoid "breaking" the agent, they become permanent backdoors.

- The Lifecycle Problem: When a project ends, or an agent is "retired," the keys often remain active. Organizations struggle to rotate or revoke these keys because they fear disrupting the agent’s complex logic or causing downtime.
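The lifecycle gap described above can be made concrete with a small inventory check. The following is a minimal sketch, not a reference implementation: the `AgentKey` record, the `key_action` function, and the 30-day `MAX_KEY_AGE` threshold are all hypothetical names and values chosen for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

# Assumed policy value for illustration; choose one that fits your environment.
MAX_KEY_AGE = timedelta(days=30)


@dataclass(frozen=True)
class AgentKey:
    """Hypothetical inventory record for one secret held by an agent."""
    key_id: str
    agent_id: str
    created_at: datetime
    agent_retired: bool = False


def key_action(key: AgentKey, now: datetime) -> str:
    """Return 'revoke', 'rotate', or 'keep' for a single key."""
    if key.agent_retired:
        return "revoke"  # a retired agent should hold no live secrets
    if now - key.created_at > MAX_KEY_AGE:
        return "rotate"  # older than the assumed policy allows
    return "keep"


# Example inventory with one fresh key, one stale key, and one orphaned key.
now = datetime(2025, 1, 31, tzinfo=timezone.utc)
inventory = [
    AgentKey("k-fresh", "support-bot", datetime(2025, 1, 20, tzinfo=timezone.utc)),
    AgentKey("k-stale", "support-bot", datetime(2024, 12, 1, tzinfo=timezone.utc)),
    AgentKey("k-orphan", "old-pilot", datetime(2025, 1, 25, tzinfo=timezone.utc),
             agent_retired=True),
]
for key in inventory:
    print(key.key_id, key_action(key, now))
```

Running a check like this on a schedule turns "keys often remain active" into a list of specific keys to rotate or revoke, which is easier to act on than a general concern.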

3. The Hybrid Agent (The Multi-Modal Workflow)

In complex enterprise environments, agents often operate in a hybrid capacity, combining autonomous capabilities with delegated human authority to complete end-to-end business processes.

A hybrid agent uses its own NHI identity to access core systems (like a database or CRM) while simultaneously using human tokens to act on behalf of a user in communication or productivity tools (like email or Slack).

- The Security Challenge 1: The Identity "Mashup": Because these agents juggle both their own service accounts and user-delegated tokens, they often fall into a "governance gap." Security teams struggle to determine if an action was performed by the agent's core identity or via the user's delegated session, making it nearly impossible to enforce a consistent security policy or baseline.

- The Security Challenge 2: Agentic Cascading: Hybrid agents often trigger "sub-agents" to complete specialized tasks. This creates a chain of delegated authority—a cascading effect—where the original human prompt ripples through multiple layers of identities and permissions. If one agent in the chain is compromised or over-privileged, the entire workflow becomes a high-speed conduit for unauthorized data access or lateral movement that is invisible to traditional monitoring tools.

The Path Forward: Securing the Agentic Workforce

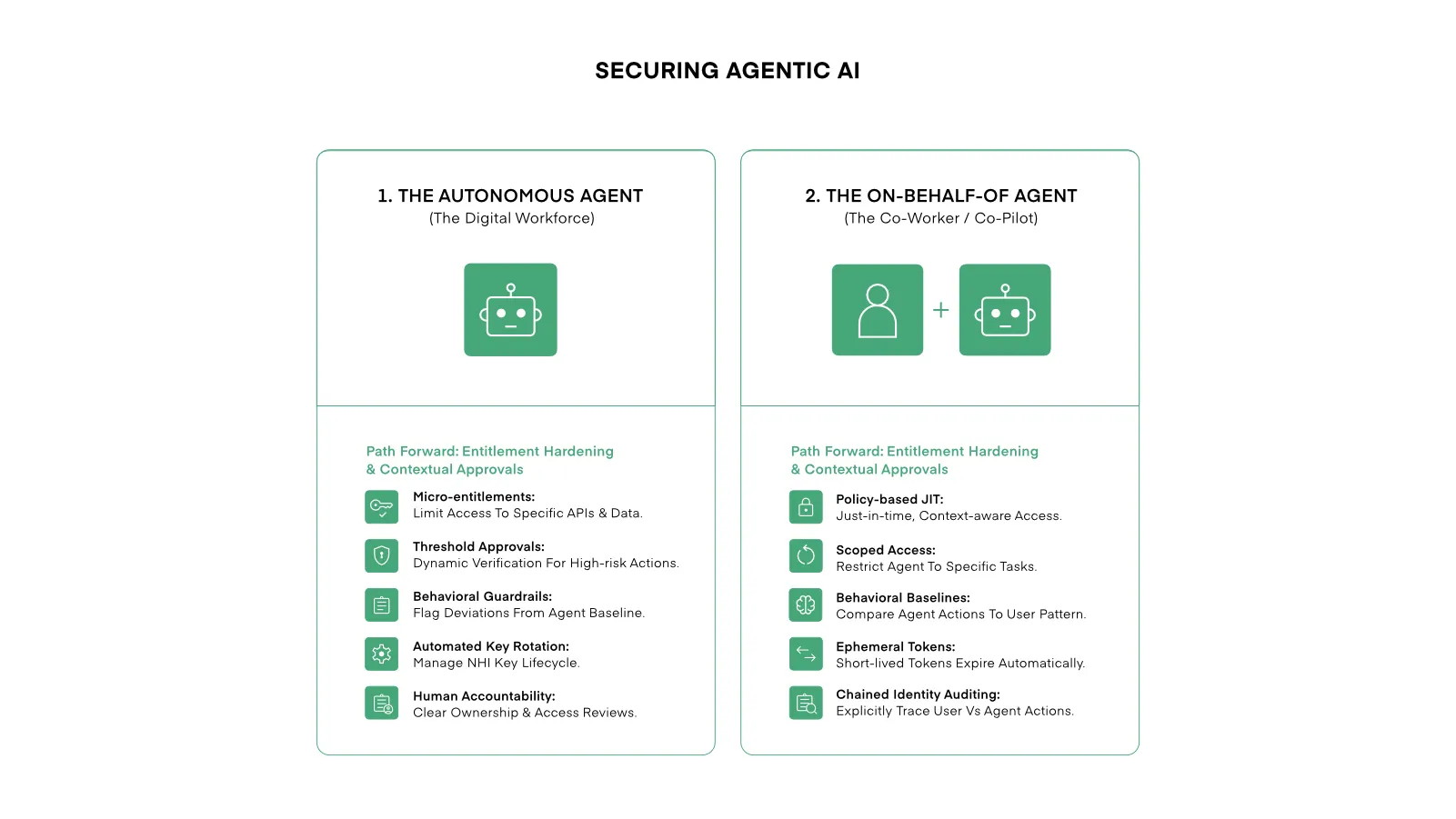

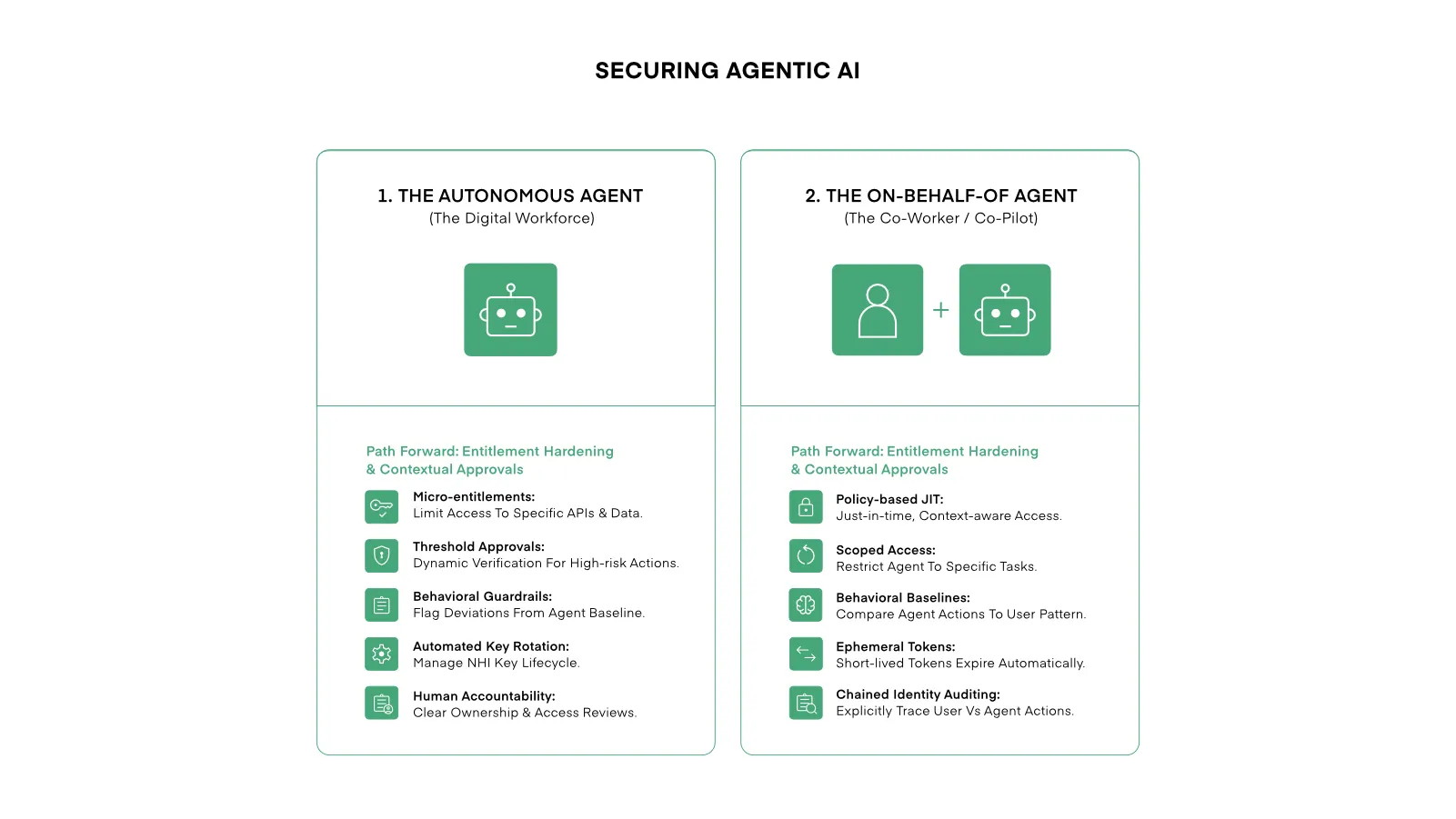

To scale safely, security teams must move from "all-or-nothing" access to a governance model tailored to each agent's role.

1. Autonomous Agents: Entitlement Hardening & Just-in-Time Access

- Micro-Entitlements: Replace broad service roles with task-specific access. Agents should only reach the specific APIs and data required for their immediate mission.

- Contextual & Threshold Approvals: Implement human-in-the-loop guardrails for high-stakes actions or require the presence of a specific business event (e.g., an open support ticket) to validate the agent's activity before execution.

- Behavioral Guardrails: Establish activity baselines for every agent. If an agent deviates from its mission—such as a support bot suddenly attempting a bulk data export—the action is automatically flagged or blocked, regardless of its assigned permissions.

- Automated Key Lifecycle: Automate the rotation, vaulting, and revocation of the NHI keys used for application access. This removes the risk of "forever keys" and prevents manual management from becoming a security backdoor.

- Human Accountability: Map every agent to a human owner responsible for its lifecycle. These "digital subordinates" must be included in the owner’s regular access reviews and compliance certifications.
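As an illustration of how micro-entitlements and contextual or threshold approvals might combine into a single authorization decision, here is a minimal sketch. The `AgentPolicy` structure, the `authorize` function, and the action names (`case.read`, `refund.issue`, and so on) are hypothetical and not taken from any specific product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentPolicy:
    """Hypothetical per-agent policy: a narrow allowlist plus contextual gates."""
    allowed_actions: frozenset       # micro-entitlements
    needs_open_ticket: frozenset     # actions that require a business event
    needs_human_approval: frozenset  # high-stakes actions


def authorize(policy: AgentPolicy, action: str, *,
              open_ticket: bool = False, human_approved: bool = False) -> str:
    """Return 'allow', 'deny', or 'pending_approval' for one requested action."""
    if action not in policy.allowed_actions:
        return "deny"  # outside the agent's task-specific access
    if action in policy.needs_open_ticket and not open_ticket:
        return "deny"  # no business event to justify the action
    if action in policy.needs_human_approval and not human_approved:
        return "pending_approval"  # human-in-the-loop gate
    return "allow"


# Assumed policy for an L1/L2 support agent.
support_bot = AgentPolicy(
    allowed_actions=frozenset({"case.read", "case.update", "refund.issue"}),
    needs_open_ticket=frozenset({"case.update", "refund.issue"}),
    needs_human_approval=frozenset({"refund.issue"}),
)

print(authorize(support_bot, "case.read"))                       # allow
print(authorize(support_bot, "data.export", open_ticket=True))   # deny
print(authorize(support_bot, "refund.issue", open_ticket=True))  # pending_approval
```

The bulk export is denied even though a ticket is open, because the action was never part of the agent's entitlement set. Behavioral baselining would sit on top of a check like this rather than replace it.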

2. On-Behalf-Of Agents: Scoped Orchestration & Delegated Guardrails

- Policy-Based JIT Access: Replace standing access to AI assistants with Just-In-Time (JIT) provisioning. A user's ability to invoke an agent should be governed dynamically by contextual business policies, such as their current role or active projects.

- Scoped Access (Permission Intersection): Prevent the agent from fully mirroring an over-privileged human. Use scoped OAuth 2.0 tokens to restrict the agent to a narrow subset of the user’s permissions, limited only to the requirements of the specific task.

- Human-Centric Behavioral Baselines: Establish a baseline by learning a user’s typical behavioral patterns. This is then used to validate agent actions; operations outside the human's normal pattern are automatically flagged for verification.

- Ephemeral Tokens & Session Lifecycle: Eliminate persistent connections to user applications. Utilize short-lived, delegated tokens tied directly to the active session that automatically expire the moment the interaction concludes.

- Chained Identity Auditing: Maintain strict forensic visibility. Ensure audit logs clearly distinguish between an action performed manually by a human and an action executed by an agent acting on their behalf.
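The permission-intersection, ephemeral-token, and chained-auditing ideas above can be sketched together in a few lines. This is an illustrative model only: `DelegatedToken`, `mint_delegated_token`, `is_permitted`, `audit_record`, the scope names, and the 15-minute lifetime are assumptions, and a real deployment would rely on its identity provider's token issuance rather than an in-process object.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class DelegatedToken:
    """Hypothetical short-lived token an agent holds on behalf of a user."""
    user: str
    agent: str
    scopes: frozenset
    expires_at: datetime


def mint_delegated_token(user, agent, user_scopes, task_scopes, now,
                         ttl=timedelta(minutes=15)):
    """Grant only scopes the user holds AND the task needs (intersection)."""
    granted = frozenset(user_scopes) & frozenset(task_scopes)
    return DelegatedToken(user, agent, granted, now + ttl)


def is_permitted(token, scope, now):
    """A scope is usable only while the token is unexpired."""
    return now < token.expires_at and scope in token.scopes


def audit_record(token, action):
    """Record both identities so agent actions are distinguishable from manual ones."""
    return {"actor": token.agent, "on_behalf_of": token.user, "action": action}


start = datetime(2025, 1, 1, 12, 0, tzinfo=timezone.utc)
token = mint_delegated_token(
    "alice", "crm-assistant",
    user_scopes={"crm.read", "crm.write", "hr.read"},  # over-privileged human
    task_scopes={"crm.read", "billing.read"},          # what the task asks for
    now=start,
)
print(sorted(token.scopes))                                           # ['crm.read']
print(is_permitted(token, "crm.write", start + timedelta(minutes=5)))   # False
print(is_permitted(token, "crm.read", start + timedelta(minutes=16)))   # False
```

Note that the intersection cuts both ways: the agent does not receive `crm.write` because the task does not need it, and it does not receive `billing.read` because the user never held it.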

3. Hybrid Agents: Governing the "Identity Mashup"

- Unified Identity Mapping: Maintain a consolidated view of activity, linking actions taken via the agent’s NHI and delegated human tokens as a single, cohesive event.

- Downstream Propagation Limits: Prevent "Cascading" risks by enforcing strict depth limits on agent-to-agent delegation, ensuring sub-agents cannot inherit more permissions than the primary agent.

- Cross-Context Audit Trails: Capture the entire "chain of command" in logs, allowing security teams to trace a sub-agent's action back through the hybrid agent to the original human intent.
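One way to picture downstream propagation limits and cross-context audit trails is a delegation record that carries the full chain of identities and can only narrow permissions at each hop. The sketch below is a simplified model under stated assumptions: `Delegation`, `delegate`, the identity labels, and the limit of two agent-to-agent hops are hypothetical.

```python
from dataclasses import dataclass

# Assumed limit on agent-to-agent hops, for illustration only.
MAX_DELEGATION_DEPTH = 2


@dataclass(frozen=True)
class Delegation:
    """Hypothetical record: who is in the chain and what the last link may do."""
    chain: tuple       # identities from the originating human to the current agent
    scopes: frozenset  # permissions available at this point in the chain


def delegate(parent: Delegation, child_agent: str, requested_scopes) -> Delegation:
    """Hand work to a sub-agent without exceeding depth or widening scopes."""
    # chain[0] is the human and chain[1] the primary agent, so adding a child
    # produces len(parent.chain) - 1 agent-to-agent hops.
    hops_after = len(parent.chain) - 1
    if hops_after > MAX_DELEGATION_DEPTH:
        raise PermissionError(f"delegation depth {hops_after} exceeds limit")
    # A sub-agent can never hold more than its parent.
    narrowed = parent.scopes & frozenset(requested_scopes)
    return Delegation(parent.chain + (child_agent,), narrowed)


root = Delegation(("user:alice", "agent:hybrid"),
                  frozenset({"crm.read", "email.send"}))
summarizer = delegate(root, "agent:summarizer", {"crm.read", "db.admin"})
formatter = delegate(summarizer, "agent:formatter", {"crm.read"})

print(sorted(summarizer.scopes))    # ['crm.read']
print(" -> ".join(formatter.chain))
```

Because every `Delegation` carries its full chain, logging that one field is enough to trace a sub-agent's action back to the original human request. A further `delegate` call from `formatter` would raise `PermissionError` under the assumed limit.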
