AI Agent Security: The application perimeter


Background

The AI agent conversation has moved fast. In the span of eighteen months we went from “chat with your documents” to autonomous agents that book meetings, triage incidents, ship code, and approve purchase orders, chaining numerous tool calls in the process.

The governance layer underneath needs to evolve just as fast to keep organizations safe.

Agent execution challenges

A single agent can act on behalf of dozens of users in a single minute. It can chain tool calls across applications that were never designed to trust each other. It can escalate its own capabilities by discovering new endpoints at runtime. And it operates at a speed that leaves reactive, after-the-fact tooling too slow to intervene.

Locking down agent execution is not the answer either: restricting an agent's capabilities forfeits the very benefit it was deployed for in the first place.

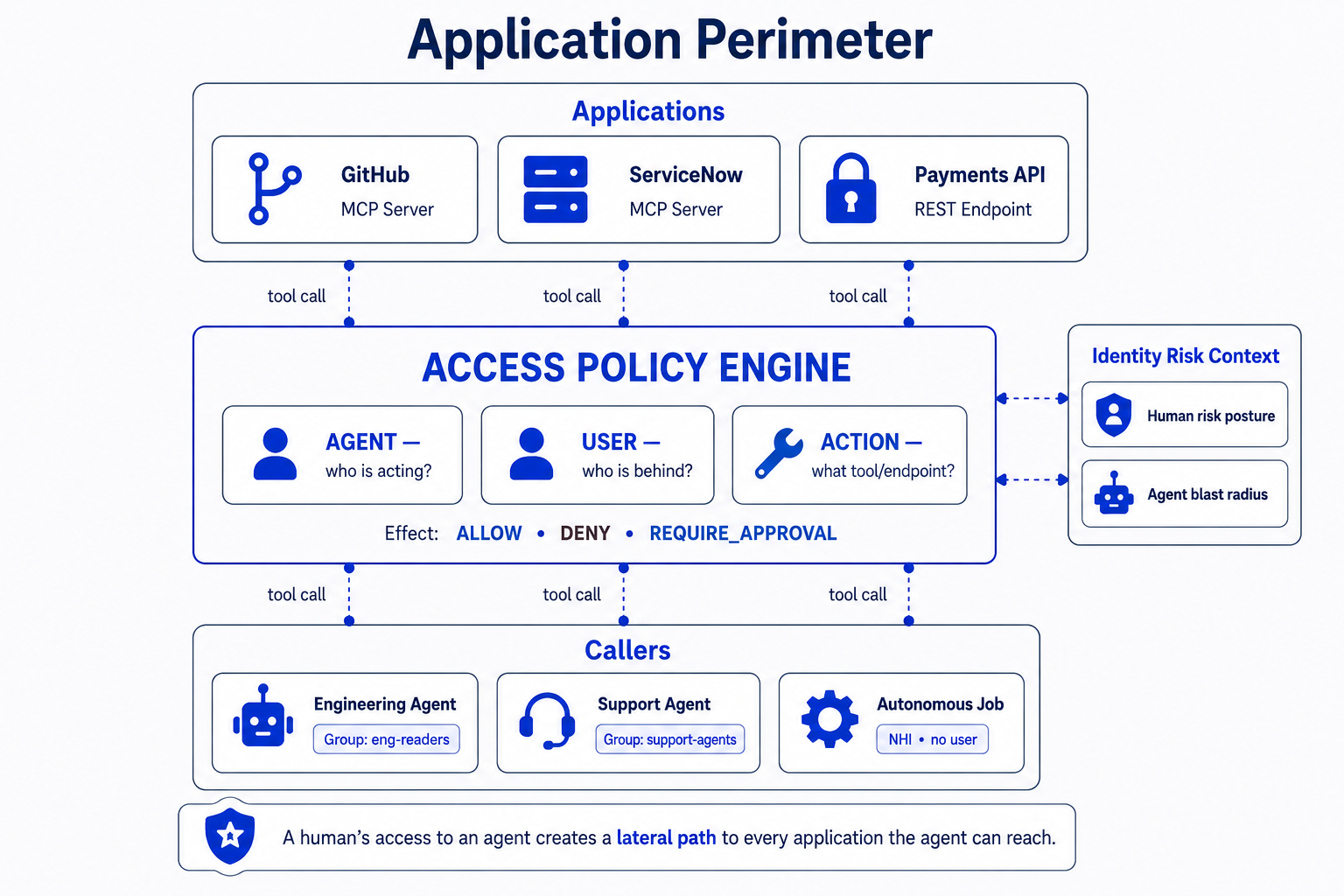

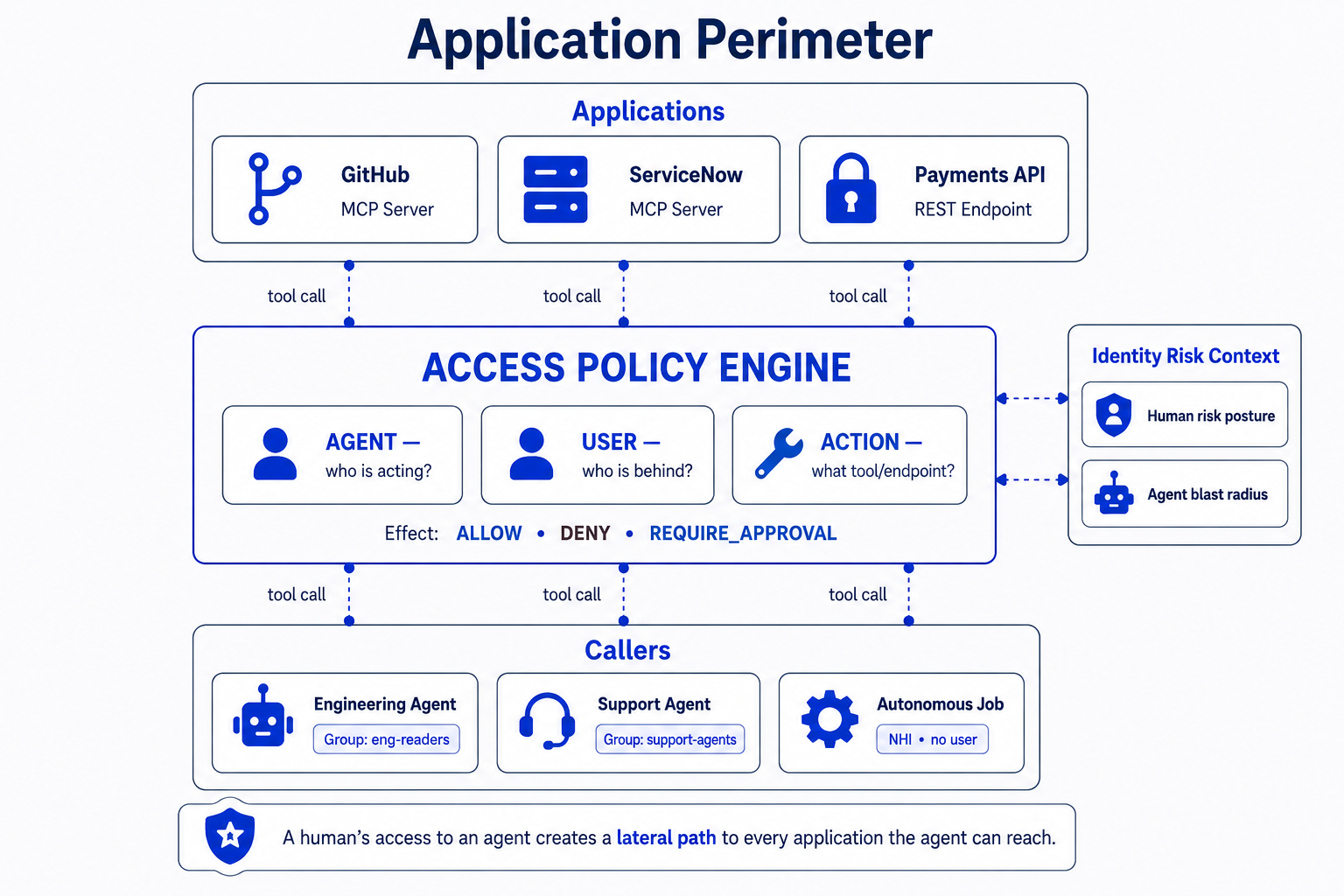

The triad that actually matters

If we assume that every agent has a responsible human behind it, every agent action forms a triad:

- Who is the human user or sponsor behind this agent?

- Which agent is acting?

- What is it trying to do?

All three must be evaluated together, in real time, before the call goes through. Evaluate any two without the third and you’ve got a gap. This is the access evaluation triad, and it’s the primitive that agentic governance needs to be built on.
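To make the triad concrete, here is a minimal Python sketch. All names (`AccessRequest`, `evaluate`, and the in-memory entitlement tables) are hypothetical stand-ins for an identity provider and an application inventory. It also assumes one particular semantics: an agent may perform an action only if the agent holds the tool *and* its human sponsor is directly entitled to that action.

```python
from dataclasses import dataclass

# Hypothetical identity data: in practice this would come from an identity
# provider and an application inventory.
HUMAN_ENTITLEMENTS = {  # human -> (app, tool) pairs they may use directly
    "alice": {("erp", "read_invoice"), ("erp", "approve_po")},
    "bob": {("erp", "read_invoice")},
}
AGENT_SPONSORS = {  # agent -> humans allowed to act through it
    "procurement-bot": {"alice", "bob"},
}
AGENT_TOOLS = {  # agent -> (app, tool) pairs granted to the agent
    "procurement-bot": {("erp", "read_invoice"), ("erp", "approve_po")},
}


@dataclass(frozen=True)
class AccessRequest:
    human: str  # who the agent is acting for
    agent: str  # which agent is acting
    app: str    # target application
    tool: str   # tool (action) being invoked


def evaluate(req: AccessRequest) -> bool:
    """Allow only if all three legs of the triad check out together."""
    action = (req.app, req.tool)
    human_may_use_agent = req.human in AGENT_SPONSORS.get(req.agent, set())
    agent_has_tool = action in AGENT_TOOLS.get(req.agent, set())
    human_has_action = action in HUMAN_ENTITLEMENTS.get(req.human, set())
    return human_may_use_agent and agent_has_tool and human_has_action


print(evaluate(AccessRequest("alice", "procurement-bot", "erp", "approve_po")))  # True
print(evaluate(AccessRequest("bob", "procurement-bot", "erp", "approve_po")))    # False
```

Here `bob` can invoke `procurement-bot`, and the agent holds `approve_po`, yet the call is denied because `bob` himself is not entitled to approve purchase orders. Checking only the (agent, action) pair would have allowed it.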

Define the application perimeter

In the pre-agent world, application usage was predictable. In the agentic world, applications are attack surfaces. Each one exposes a set of callable tools that agents can discover, chain, and invoke autonomously, thereby increasing the likelihood of data theft and damage.

Rather than trying to lock down every agent, a more tractable approach is to protect critical applications from being misused by agents.

In a sense, the goal reduces to ensuring that critical data is not misused through unintended agent behavior.

To succeed, the application perimeter has to be tied to the risk posture and entitlements of the identities behind each call.
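A minimal sketch of an application perimeter, assuming each onboarded application declares its tool surface and a criticality flag, and that each human sponsor carries a numeric risk score. `GovernedApp`, `PERIMETER`, `HUMAN_RISK`, and the 0.5 threshold are all hypothetical; entitlement checks are omitted to keep the focus on the perimeter itself.

```python
from dataclasses import dataclass, field


@dataclass
class GovernedApp:
    name: str
    critical: bool
    tools: set[str] = field(default_factory=set)  # onboarded tool surface


# Hypothetical perimeter: only onboarded apps and tools are reachable by agents.
PERIMETER = {
    "payroll": GovernedApp("payroll", critical=True, tools={"read_salary", "update_salary"}),
    "wiki": GovernedApp("wiki", critical=False, tools={"search", "read_page"}),
}

# Hypothetical sponsor risk scores (0.0 = lowest risk, 1.0 = highest).
HUMAN_RISK = {"alice": 0.2, "mallory": 0.8}
MAX_RISK_FOR_CRITICAL = 0.5  # assumed threshold


def check_perimeter(human: str, app: str, tool: str) -> str:
    governed = PERIMETER.get(app)
    if governed is None or tool not in governed.tools:
        # Apps or endpoints an agent discovers at runtime were never onboarded: deny by default.
        return "deny: outside perimeter"
    # Unknown humans are treated as maximum risk.
    if governed.critical and HUMAN_RISK.get(human, 1.0) > MAX_RISK_FOR_CRITICAL:
        return "deny: sponsor risk too high"
    return "allow"


print(check_perimeter("alice", "payroll", "read_salary"))    # allow
print(check_perimeter("mallory", "payroll", "read_salary"))  # deny: sponsor risk too high
print(check_perimeter("alice", "payroll", "export_all"))     # deny: outside perimeter
```

Denying anything outside the onboarded tool surface keeps runtime endpoint discovery from silently widening an agent's reach, while the risk gate applies only where an application is marked critical.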

Policy composition is key

Agents are expected to vastly outnumber human identities. At that scale, policy-driven governance needs to be composable, so that it can express the (human, agent, action) triad in a reusable way. A policy can decide whether a tool call is allowed on an ongoing basis or must always go through an approval process.

This calls for a policy engine built specifically to govern critical business applications.
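One way to make policies composable is to treat each policy as a function of the triad that either returns a decision or abstains, and to combine policies so that the most restrictive opinion wins. The sketch below is a hypothetical illustration, not any specific product's policy language. The decision names, the `when`/`combine` helpers, and the example rules are all assumptions.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import Callable, Optional


class Decision(IntEnum):
    # Ordered by restrictiveness so max() picks the strictest outcome.
    ALLOW = 0
    REQUIRE_APPROVAL = 1
    DENY = 2


@dataclass(frozen=True)
class Triad:
    human: str
    agent: str
    action: str  # "app:tool", e.g. "erp:approve_po"


Policy = Callable[[Triad], Optional[Decision]]  # None means "no opinion"


def when(predicate: Callable[[Triad], bool], decision: Decision) -> Policy:
    """Reusable rule: return `decision` when `predicate` matches, else abstain."""
    return lambda t: decision if predicate(t) else None


def combine(*policies: Policy, default: Optional[Decision] = Decision.DENY) -> Policy:
    """Most restrictive opinion wins; if every policy abstains, return `default`."""
    def combined(t: Triad) -> Optional[Decision]:
        opinions = [d for d in (p(t) for p in policies) if d is not None]
        return max(opinions) if opinions else default
    return combined


# Hypothetical building blocks, reusable across applications.
CONTRACTORS = {"bob"}


def is_read(t: Triad) -> bool:
    return t.action.split(":", 1)[-1].startswith("read")


read_access = when(is_read, Decision.ALLOW)
po_approval = when(lambda t: t.action == "erp:approve_po", Decision.REQUIRE_APPROVAL)
no_contractor_writes = when(
    lambda t: t.human in CONTRACTORS and not is_read(t), Decision.DENY
)

erp_policy = combine(read_access, po_approval, no_contractor_writes)

print(erp_policy(Triad("alice", "procurement-bot", "erp:approve_po")).name)  # REQUIRE_APPROVAL
print(erp_policy(Triad("bob", "procurement-bot", "erp:approve_po")).name)    # DENY
```

Because `combine` returns an ordinary policy, rule sets can be nested (pass `default=None` so an inner set abstains when nothing matches). The contractor case shows why most-restrictive-wins matters: `approve_po` would otherwise only require approval, but the contractor rule escalates it to a denial.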

The humans don’t disappear — they move up

There's a persistent misconception that agents replace human judgment. In practice, agents shift where human judgment is applied. Instead of a person clicking the button, a person decides which agents are allowed to click which buttons, and under what circumstances.

The relationship is also bidirectional. A human's access to an agent implicitly grants them a lateral path to every application that agent can reach, which raises that human's risk in ways that direct entitlement grants do not capture. Conversely, the rules governing what an agent can do on an application should be informed by the risk posture of the humans behind it. For example, a contractor on a managed device in the finance department is a different trust context from a full-time engineer on an unmanaged laptop.

Human security, non-human identity (NHI) security, and agent security aren't three separate programs: they're one program evaluated at three different layers of the same call chain. The winning abstraction is one where the human operates at the level of intent and the system compiles that intent into enforceable, auditable, real-time decisions at the tool-call layer, drawing on the identity graph of both the human and the agent to do so.
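The lateral-path point can be made concrete with a small identity graph. This is a minimal sketch that assumes hypothetical tables of direct app entitlements, of which agents each human can invoke, and of which apps each agent can reach. It follows only one hop, so agents calling other agents are not modeled.

```python
# Hypothetical identity graph for illustration.
DIRECT_APPS = {  # human -> apps they are directly entitled to
    "alice": {"wiki", "crm"},
    "bob": {"wiki"},
}
CAN_INVOKE = {  # human -> agents they can use
    "alice": {"support-agent"},
    "bob": {"support-agent", "finance-agent"},
}
AGENT_APPS = {  # agent -> apps the agent can reach
    "support-agent": {"crm", "ticketing"},
    "finance-agent": {"erp", "payroll"},
}


def effective_reach(human: str) -> set[str]:
    """Apps a human can touch directly, or laterally through agents they can invoke."""
    reach = set(DIRECT_APPS.get(human, set()))  # copy so the source table is not mutated
    for agent in CAN_INVOKE.get(human, set()):
        reach |= AGENT_APPS.get(agent, set())
    return reach


def lateral_only(human: str) -> set[str]:
    """Apps reachable only through agents, i.e. invisible to a direct entitlement review."""
    return effective_reach(human) - DIRECT_APPS.get(human, set())


print(sorted(lateral_only("bob")))  # ['crm', 'erp', 'payroll', 'ticketing']
```

In this toy graph, `bob` is directly entitled only to `wiki`, yet through `finance-agent` he can reach `erp` and `payroll`. A review of his direct entitlements alone would never surface that exposure.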

What comes next

The agentic governance stack is still early. However, the shape of the solution is becoming clear:

- Application-centric onboarding that treats every app as a governed tool surface.

- Compositional policy that evaluates the full (human, agent, action) triad instead of flattening it into legacy role assignments.

- Group-level reasoning that lets administrators think in populations and intent, not individual agent wiring.

At andromeda, we are building a solution around these guiding principles, which we believe will help enterprises bring governance to their agentic workloads without hampering productivity.